Earlier this year, we partnered with AARP to field a first-ever experimental qualitative study. The goal was to test whether new generative AI tools could assist or replace facets of our market research methodologies, and to compare how the AI solutions stacked up against results produced by human beings. To do this, we conducted a research project exploring the impact of current economic uncertainty, inflation, and job market dynamics on Americans aged 50+, using both traditional research methods and new generative AI solutions.

The Process

The process of this study involved three different approaches: Traditional (only Humans), Hybrid (a mix of Humans and AI), and Fully Automated (only AI).

- The Traditional approach involved in-depth interviews with 10 human respondents and human analysis;

- Hybrid involved using AI-assisted analysis of the transcripts from the interviews with human respondents;

- Fully Automated involved using AI as both the respondents (Persona or Cohorts) and the analyst.

We primarily used ChatGPT-4, given its public availability, and we added Google’s Bard as soon as it became publicly available.

Key Takeaways

We’ll cover four main takeaways that we learned through this experience: (1) it’s all about the prompt, (2) preparation + frameworks are key, (3) immediate use cases and examples, and (4) humans are still required.

In the Hybrid approach, we used AI to summarize and analyze respondent answers by pulling information from the available interview transcripts. We prompted AI to create lists of the health concerns people mentioned, to rank those concerns in order of significance, and to create short summary statements. It took some trial and error to figure out how best to word the prompts to get a specific, usable response from AI. Here are some tips we learned about the importance of prompts:

- “Explain your logic.” Ask AI to explain its logic, to better understand why it responded the way it did — it will also help you to see some of the stereotypes that are in the data that AI has been trained on.

- “Limit prose.” AI can sometimes get wordy, so ask it to be concise and limit the prose.

- “Only refer to ideas you have already shared.” This instruction helps keep AI from pulling in new ideas or making something up.

- Challenge the things that don’t make sense. AI can still make mistakes! If something doesn’t sound right, challenge it. Figure out why it’s wrong.

- Always provide context. To get accurate analysis, provide the context needed for each prompt or question.

- Be specific and layered (details matter).

- Values laddering works! (More on this later).
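As an illustration of the tips above, here is a minimal sketch of how context, a specific task, and the standing instructions could be assembled into a single prompt. The helper name, section labels, and example wording are our assumptions for illustration, not the actual prompts used in the study, and the sketch only builds text (it does not call any AI service).

```python
# Hypothetical helper: names and wording are illustrative only,
# not the prompts actually used in the study.
PROMPT_TIPS = [
    "Explain your logic.",
    "Limit prose.",
    "Only refer to ideas you have already shared.",
]


def build_analysis_prompt(context: str, task: str, transcript: str) -> str:
    """Combine context, a specific task, and standing instructions into one prompt."""
    sections = [
        f"Context: {context.strip()}",          # always provide context
        f"Task: {task.strip()}",                # be specific about what you want
        "Instructions: " + " ".join(PROMPT_TIPS),
        "Transcript:\n" + transcript.strip(),
    ]
    return "\n\n".join(sections)


prompt = build_analysis_prompt(
    context="Interviews with Americans aged 50+ about economic uncertainty.",
    task="List the health concerns mentioned, ranked in order of significance.",
    transcript="(paste interview transcript here)",
)
print(prompt)
```

Keeping the standing instructions in one list makes it easy to reuse them across prompts and to adjust the wording during trial and error.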

The Fully Automated process involved asking the available generative AI tools the same interview questions we asked the human participants, using the same approaches and techniques to extract the same insights. We asked ChatGPT to roleplay as personas that corresponded to real human counterparts, writing a prompt that included the demographic information of the person ChatGPT was to portray. For example, after we interviewed Alexa (a real human respondent), we asked ChatGPT to act as Alexa, and included her demographic information in the prompt: a 53-year-old white female, living in the suburbs, making $75,000-$100,000 yearly as a self-employed worker with a bachelor’s degree. We then asked ChatGPT the same questions that we had asked the real Alexa and compared the answers. Some of them ended up being very similar, especially in the values laddering exercise.
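To make the persona setup concrete, here is a rough sketch of turning a respondent's demographic profile into a roleplay prompt. The `Persona` class, the `build_persona_prompt` function, and the closing instruction are hypothetical names and wording chosen for illustration. Only the demographic details come from the Alexa example above.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Demographic profile for a roleplay prompt (hypothetical structure)."""
    name: str
    age: int
    race: str
    gender: str
    location: str
    income: str
    employment: str
    education: str


def build_persona_prompt(p: Persona) -> str:
    """Turn a demographic profile into a roleplay instruction."""
    return (
        f"Act as {p.name}, a {p.age}-year-old {p.race} {p.gender}, "
        f"living in {p.location}, making {p.income} yearly as a "
        f"{p.employment} with a {p.education}. "
        # The closing instruction below is assumed wording, not from the study.
        "Answer each interview question in the first person, as this person would."
    )


alexa = Persona(
    name="Alexa",
    age=53,
    race="white",
    gender="female",
    location="the suburbs",
    income="$75,000-$100,000",
    employment="self-employed worker",
    education="bachelor's degree",
)
persona_prompt = build_persona_prompt(alexa)
print(persona_prompt)
```

Storing each profile as structured data means the same template can be reused for every human respondent who has an AI counterpart.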

Values Laddering With AI

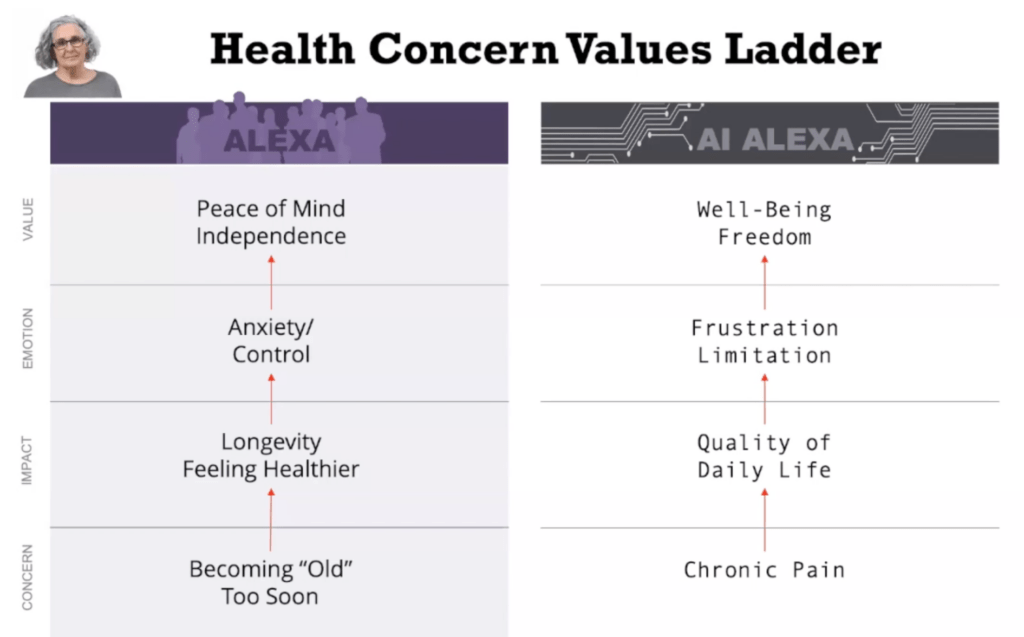

Values laddering is a way to understand decision making and personal relevance by linking the most important attributes of a choice to the rational and emotional benefits that motivate decisions. With this exercise, we often uncover core values and key emotional benefits. These are extremely important in developing strategy, as they tend to be the hinge between the more rational components of a consideration and the motivation that drives decisions.

The chart in this section compares the values laddering results for the real human respondent and the AI respondent (who was roleplaying as “Alexa”). We start by asking what the respondent’s main concern is, then what impact that concern has on their life, then what emotion that makes them feel, and finally arrive at the value that drives their decision making on issues related to this concern. The responses from the human and the AI are similar, which, in this instance, demonstrates that AI can be effective in generating human value maps.
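The four-step sequence described above (concern, impact, emotion, value) can be sketched as an ordered list of questions, where each follow-up quotes the previous answer. This is a simplified illustration under our own assumptions: the `LADDER` list, the `ladder_prompt` function, and the exact question wording are hypothetical, not the study's interview guide.

```python
# Hypothetical sketch of the laddering sequence: concern -> impact -> emotion -> value.
LADDER = [
    ("concern", "What is your main concern?"),
    ("impact", "What impact does that concern have on your life?"),
    ("emotion", "What emotion does that make you feel?"),
    ("value", "What value drives your decision making on issues related to this concern?"),
]


def ladder_prompt(rung: int, previous_answer: str = "") -> str:
    """Return the question for a rung, quoting the previous answer when there is one."""
    _name, question = LADDER[rung]
    if rung == 0 or not previous_answer.strip():
        return question
    return f'You said: "{previous_answer.strip()}". {question}'


# Walk the ladder with a placeholder answer (no real respondent data is used here).
for rung in range(len(LADDER)):
    print(ladder_prompt(rung, "(respondent's previous answer)"))
```

The same sequence can be put to a human respondent and to an AI persona, so the answers at each rung can be compared side by side.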

Sharing Our Findings

Maury Giles, our Chief Growth Officer, was in Austin, TX last week to present the findings of this pioneering research at the annual IIEX North America conference along with Patty David, Vice President of Consumer Insights at AARP.

The Insights Innovation Exchange (IIEX) is an annual conference where industry visionaries and brand leaders join engaging sessions and roundtables to explore the latest technologies, methodologies, and emerging trends driving the future of market research and consumer insights. With dynamic discussions on topics like tech-driven market research, the application of generative AI, and making insights actionable, the event helps attendees discover their industry’s upcoming best practices.

“Our team did a great job with the AARP/AI presentation,” said Marjie Sands, Group Director at Heart+Mind, who attended the conference. “Seeing the presentation on the big screen made the whole research project come to life and it seemed to impress the attendees, as I saw a lot of cameras out taking pictures.”

Giles and David also presented these research findings at the Insights Association Annual Conference in April, an event intended to examine four important disciplines — Qualitative Research, Experience Management, Data Analytics/Quantitative, Behavioral Research — and within each explore emerging trends, quality advancements, DEI progress, tangible business impact, and more.

Conclusion

With this project, we discovered effective ways of leveraging AI to improve and evolve insights generation work among market researchers. The important thing about using AI in market research is not to rely on AI to tell you the answers or to replace human respondents — but instead to challenge your thinking and to come up with new ideas.

“You’re going to use [AI] to better think about it, and then leverage it with your thinking and other tools to determine how it applies,” said Maury Giles, our Chief Growth Officer. “The human is still in charge.”

If you want to learn more about this study and what we learned, reach out to Maury Giles.